5 Things We Would Like to See Change in the Monitor Market

This article delves into the current monitor market and considers what we would like to see more of, and less of, from display manufacturers in the future. You will see that we feel passionately about some of the areas on our list. It is simply our honest view of areas that we feel could and should be improved for the good of the market, and ultimately for the good of the consumer. We hope that it will give display manufacturers something to consider and work on for their future displays. It’s not exhaustive, and we’ve refrained from “wish list” type requests such as “I want x size display with x technology and x features”. This is more about improving general areas of specs and performance that are increasingly important in this market.

So without further ado, here’s our countdown of 5 things we’d like to see change in the monitor market:

5. Better flexibility with sRGB emulation mode controls

- sRGB emulation mode should always be available on a wide gamut screen

- Brightness control is an absolute must

- Access to further controls like colour temp, RGB, gamma would be welcome

Nearly all new monitors released in today’s market have a wide colour gamut. Many people like this for gaming, HDR content and multimedia, as it gives a nice boost in colours and vividness. Those who are buying a screen just for those uses are often less worried about accuracy and more interested in an image that pops and looks vivid and saturated. If the colour space of the monitor is large enough, it can also be useful if you want to work specifically in larger colour gamuts like Adobe RGB and DCI-P3. Many people, though, will still want to be able to work accurately with standard gamut / sRGB / SDR content for general day to day, office and perhaps even photo work. We’ve talked in the past about the importance of a reliable and usable sRGB emulation mode, and this is often the only way for a typical consumer to tame their wide gamut display back to sRGB if they need to.

We have criticised displays in the past where an sRGB mode is not provided at all (e.g. the fairly recent Dell Alienware AW2721D and AW3821DW). As far as we’re concerned, every wide gamut display should include an sRGB emulation mode. If it doesn’t, we penalise it in our reviews, as will other sites. That applies to gaming displays as well; this should be a basic requirement for all wide gamut displays.

We have also criticised some displays where an sRGB mode is offered, but it has been entirely locked down by the manufacturer (e.g. the Philips 329P1H). This is particularly problematic if the brightness control is locked, as it gives the user no flexibility to adjust the brightness to their liking or ambient light conditions. Sometimes it’s locked at crazy settings that make the mode unusable as well. Again, we will penalise any screen where the brightness control in the sRGB mode is unavailable. That is a basic must-have for any preset mode in our opinion.

The final point, and the thing we would like to see more of from future monitors, is access to more controls and configuration in this mode. It is very rare for the user to have access to many settings when using this mode, which makes it very hard if you want to adjust the colour temp, gamma, RGB channels etc. Sometimes you might even need to do this to correct a problem with the manufacturer’s setup, for instance if it is too warm or cool by default. In those situations, not having access is a problem and can make the mode unusable in some cases.

We should put this into context though: in some cases this may be more of a nice-to-have than an absolute requirement in our opinion. If the manufacturer has carried out a good factory calibration and achieved decent gamma and colour temp performance, then many users won’t even need to adjust things anyway. Having access to settings you won’t need isn’t of any value, and we certainly wouldn’t consider these modes “broken” just because they don’t offer the setting control. That is, as long as the targets for gamma and white point/colour temp are met nicely. If it’s calibrated well then it’s not vital for the majority of users to have access to those further controls (but brightness is still a must-have). However, it still feels unnecessary to have these controls locked for those who might want to change things, and for completeness. We’d like to see a mode that just caps the colour gamut to sRGB, but still allows full customisation of all the other options (as was done on the Asus ROG Swift PG32UQX, for example).

4. Use curves in the appropriate way

- Save curves for large format ultrawide screens where they are useful

- Stick to flat format for smaller screens

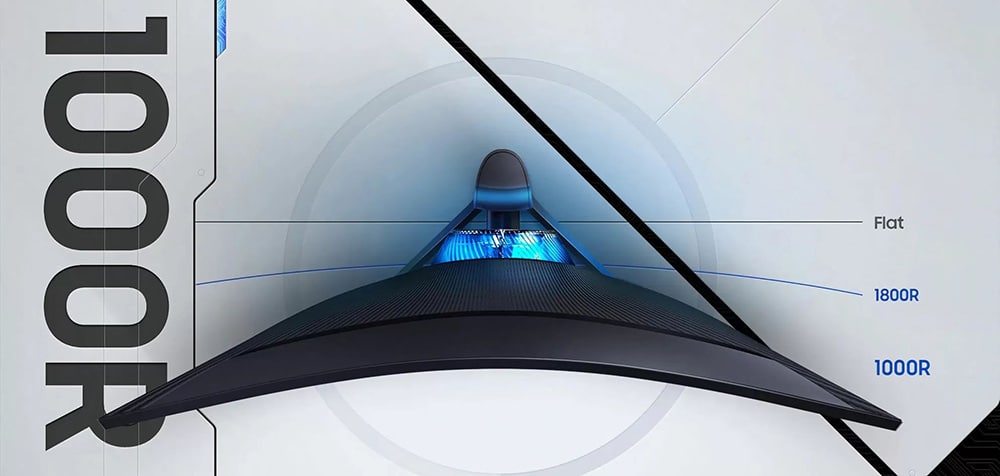

We’ve seen a large increase in the number of displays with a curved format in recent times, ranging from a fairly modest curve like 3000R or 2300R to far more aggressive curves like 1000R. We like curved screens for large format ultrawide panels, and they have their place for many users at sizes like 34” and 35”. They can help to bring the wide edges into a more comfortable viewing position and make the screen feel more natural. Even aggressive curves like 1000R can be useful for screens like the Samsung Odyssey Neo G9, which is a massive 49” ultrawide.
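To put those curvature figures into perspective, the "R" number is the radius of the curve in millimetres, so 1000R is a 1 metre radius. The sketch below is a rough geometric illustration only: it assumes the panel width can be treated as an arc of that circle, and the size and radius pairings in the loop are our own illustrative picks rather than measurements of any specific product.

```python
import math

MM_PER_INCH = 25.4


def screen_width_mm(diagonal_in, aspect_w, aspect_h):
    """Panel width from its diagonal and aspect ratio (flat-panel geometry)."""
    return diagonal_in * aspect_w / math.hypot(aspect_w, aspect_h) * MM_PER_INCH


def curve_angle_deg(width_mm, radius_mm):
    """Angle the screen wraps through, treating the width as arc length."""
    return math.degrees(width_mm / radius_mm)


def curve_depth_mm(width_mm, radius_mm):
    """How far the edges sit in front of the centre (the sagitta of the arc)."""
    half_angle = width_mm / (2.0 * radius_mm)
    return radius_mm * (1.0 - math.cos(half_angle))


# Illustrative pairings of size, aspect ratio and curvature (assumptions).
for label, diagonal, aw, ah, radius in [
    ("49in 32:9 at 1000R", 49, 32, 9, 1000),
    ("34in 21:9 at 2300R", 34, 21, 9, 2300),
    ("27in 16:9 at 1000R", 27, 16, 9, 1000),
]:
    width = screen_width_mm(diagonal, aw, ah)
    print(
        f"{label}: wraps {curve_angle_deg(width, radius):.0f} degrees, "
        f"edges {curve_depth_mm(width, radius):.0f} mm forward of centre"
    )
```

The same radius wraps a narrow screen through a much smaller angle than a very wide one, which is one way to compare how a given curvature plays out at different sizes.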

However, we don’t really like the curved format for smaller 16:9 screens of 27 to 32”. Most people seem to prefer a flat screen in those sizes, and it’s a shame when the focus is so much on curves that people have to make do. The Samsung Odyssey G7 models were a good example: excellent screens in 27” and 32” sizes, with 1440p resolution, fast VA response times (finally!), and a 240Hz refresh rate. They were only available in a curved format though, which we think is unnecessary for screens of this size and put many people off. It leads to problems with viewing angles when trying to use the screen from different positions. It makes general usage a bit tricky too, as straight lines no longer look straight. Those models also used an aggressive 1000R curve, further compounding the issue.

We would much rather see curved format reserved for large ultrawide screens, instead of using it for the sake of it on smaller screens where it’s frankly unnecessary.

3. A single overdrive setting experience for all gaming monitors

- Make things simpler for users in today’s market of high refresh rates and VRR

- A single overdrive setting should be all you need to worry about for a gaming screen

- Invest in variable overdrive development or use NVIDIA G-sync more widely

In the modern market of high refresh rates, G-sync and FreeSync, it has become increasingly complex to evaluate a monitor’s performance and response times. The reality is that response time behaviour is impacted by the active refresh rate of a screen in most cases, which can cause complications in VRR situations. You will see from our reviews that we test the G2G response time and overshoot performance at a range of refresh rates, and in a range of modes and monitor settings. Normally it’s easy to identify the optimal overdrive setting on the monitor when running at the maximum fixed refresh rate, but what if that refresh rate is going to vary during usage due to VRR (G-sync and FreeSync)? What happens to that performance when the frame rate and refresh rate drop?

All too often we see a common problem, which to be fair normally applies to adaptive-sync (FreeSync) screens and not to Native NVIDIA G-sync screens (those with the hardware module), which we will talk about in a moment:

The problem: As the refresh rate lowers the G2G response times stay static, but the overshoot levels increase.

This means that if your frame rate drops past a certain point, the overshoot becomes very distracting and problematic. This break point will vary, but somewhere within that VRR range it becomes too much of a problem. The only way to overcome this is to lower the overdrive setting on the monitor to something less aggressive that removes the overshoot. But that then has the problem that when the refresh rate increases again, the pixel transitions are not as fast and you need to change the setting back!

If the panel is fast enough, and the overdrive has been tuned appropriately and sensibly it is sometimes possible for manufacturers to follow this pattern but for a single mode to still be usable even at the lower refresh rates, just as long as that overshoot doesn’t become too high. For instance models like the LG 27GL850 and 27GN950 were arguably ok even down to the lowest refresh rates at a single setting. Not perfect, but better than most models where it’s normally a split somewhere in the middle of the range before you need to change.

We’ve known some screens to need more than 2 settings across a refresh rate VRR range too! That’s even more of a pain.

As you can see, this can become a problem and it’s not really practical to change the setting during usage of course. You normally end up having to evaluate your frame rate range in a given game, and then select the relevant overdrive mode from there that will give you the best balance. Or refer to reviews where this is tested and recommendations are provided. You might need to do this multiple times for different games and it’s just a complication most people don’t want to have to worry about.
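The manual process described above can be sketched as a small lookup. Everything here is hypothetical: the mode names, the measurement figures and the 15% overshoot tolerance are made up for illustration and follow the pattern described earlier (G2G stays roughly static while overshoot rises as the refresh rate falls). Real figures would come from review measurements of a specific screen.

```python
# Hypothetical measurements: mode -> {refresh_hz: (avg_g2g_ms, worst_overshoot_pct)}
MEASUREMENTS = {
    "Normal": {60: (7.0, 2.0), 100: (7.0, 1.0), 144: (7.0, 0.0)},
    "Fast": {60: (5.0, 18.0), 100: (5.0, 9.0), 144: (5.0, 3.0)},
    "Faster": {60: (3.5, 45.0), 100: (3.5, 28.0), 144: (3.5, 12.0)},
}


def best_mode(measurements, low_hz, high_hz, max_overshoot_pct=15.0):
    """Pick the fastest mode whose overshoot stays tolerable across the range.

    Returns None if no mode has data in the range or none stays under the
    (assumed) overshoot tolerance.
    """
    candidates = []
    for mode, by_refresh in measurements.items():
        in_range = [v for hz, v in by_refresh.items() if low_hz <= hz <= high_hz]
        if not in_range:
            continue
        worst_overshoot = max(overshoot for _, overshoot in in_range)
        mean_g2g = sum(g2g for g2g, _ in in_range) / len(in_range)
        if worst_overshoot <= max_overshoot_pct:
            candidates.append((mean_g2g, mode))
    return min(candidates)[1] if candidates else None


# A game that holds 100 to 144 fps can use a more aggressive mode than one
# that dips down to 60 fps.
print(best_mode(MEASUREMENTS, 100, 144))
print(best_mode(MEASUREMENTS, 60, 144))
```

With this made-up data the recommended mode changes depending on the frame rate range of the game, which is exactly the complication a single overdrive setting would remove.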

VRR has been around for ages now. What we need in this day and age from all gaming displays is a nice, simple single overdrive setting experience. Something you can “set and forget” as Tim at Hardware Unboxed likes to say.

Gaming displays that make use of NVIDIA’s Native G-sync hardware module benefit from the inclusion of their “variable overdrive” technology. This tunes the overdrive in a complex way across all transitions and refresh rates. In practice this basically turns down the overdrive dynamically as the refresh rate lowers. This means response times slow a bit, but that’s generally fine as they don’t need to be quite as fast to keep up with the reduced frame rate any more. But its primary goal is to reduce the overshoot levels and control that problem. This works really well from what we’ve seen, and results in a single overdrive setting experience on Native G-sync screens. It is one of the reasons why these screens are still very popular.
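As a very rough illustration of the idea of turning the overdrive down as the refresh rate lowers, here is a toy sketch. It is not how NVIDIA's module or any manufacturer's implementation works: real variable overdrive is tuned per transition, whereas this hypothetical function just scales a single "strength" value linearly across an assumed 48 to 144Hz VRR range.

```python
def overdrive_strength(refresh_hz, min_hz=48.0, max_hz=144.0,
                       min_strength=0.2, max_strength=1.0):
    """Toy model: scale overdrive strength linearly with the active refresh rate.

    All parameters are assumptions for illustration. Strength is a unitless
    value where 1.0 is the full overdrive tuned for the maximum refresh rate.
    """
    clamped = max(min_hz, min(max_hz, refresh_hz))
    fraction = (clamped - min_hz) / (max_hz - min_hz)
    return min_strength + fraction * (max_strength - min_strength)


for hz in (144, 120, 96, 60, 48):
    print(f"{hz}Hz -> overdrive strength {overdrive_strength(hz):.2f}")
```

The point is only that the monitor, rather than the user, picks the overdrive level for the current refresh rate, so one OSD setting can cover the whole VRR range.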

Some manufacturers have started to invest in R&D to create variable overdrive even on non-Native G-sync screens. For instance Asus now include this on their normal adaptive sync screens and from what we’ve seen so far (e.g. the Asus ROG Swift PG329Q) it seems to work generally well, and is certainly a step in the right direction. We will also be testing this soon on their latest ROG Swift PG32UQ display.

Moving forward, it feels like it’s starting to become unacceptable for any decent gaming display to have a situation where you don’t have a single overdrive setting experience. We’ve always talked about this anyway in our reviews, but we would like to see manufacturers pay more attention to this, and not just focus on a single refresh rate max. The continued use of the NVIDIA G-sync module is a good way to do this, and we still believe that is a useful tool for any gaming display. If it’s an adaptive sync screen, we’d love to see more manufacturers develop usable and effective variable overdrive technology too.

We should give quick mention here to another fairly common trend we see from adaptive-sync gaming screens, usually lower end models. Sometimes you will see that as the refresh rate increases, the response times are slower – which is totally counter-intuitive. For example see the recently tested LG 32GN600. Logic would dictate that as the refresh rate is increased, the response times need to be improved and made faster to keep up, not made slower. This often results in higher refresh rates being unusable as the response times cannot keep up. This is pretty inexcusable and worse than the problem we described above.
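The reason response times need to get faster, not slower, as the refresh rate rises comes down to simple arithmetic: each frame is only on screen for 1000 / refresh rate milliseconds. The sketch below uses made-up G2G figures (not measurements of any particular screen) to show how the share of transitions that complete within one refresh window shrinks as the refresh rate goes up.

```python
def refresh_window_ms(refresh_hz):
    """Time each frame is displayed for, in milliseconds."""
    return 1000.0 / refresh_hz


def share_within_window(g2g_times_ms, refresh_hz):
    """Fraction of measured transitions that finish inside one refresh window."""
    window = refresh_window_ms(refresh_hz)
    within = sum(1 for t in g2g_times_ms if t <= window)
    return within / len(g2g_times_ms)


# Hypothetical G2G measurements for a handful of transitions, in ms.
example_times = [4.0, 6.0, 8.0, 10.0]

for hz in (100, 144, 165):
    print(
        f"{hz}Hz: window {refresh_window_ms(hz):.2f} ms, "
        f"{share_within_window(example_times, hz):.0%} of transitions keep up"
    )
```

If the response times also get slower at the higher refresh rates, as in the behaviour described above, that share falls even further.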

Bonus request – VA response times

While we are talking about response times, we would really like to see further improvements in VA panel response times. Transitions from black to grey have long been a problem on this technology, resulting in common “black smearing” on moving content. We saw great improvements with the Samsung Odyssey G7 displays where this had been cleared up, finally making VA a viable gaming option for many people. However, there’s still a widespread issue with response times from VA technology that we’d love to see improved by AU Optronics and other manufacturers of this technology.

2. Either drop HDR 400 certification altogether or re-evaluate the criteria

- Local dimming should be a pre-requisite for a screen to be anywhere near the HDR term

- Wide colour gamut and 10-bit colour depth are also needed

- Stop rewarding lazy manufacturers

Long-time readers will be well aware of our gripes with the HDR 400 certification that VESA have specified, as we’ve been vocal about this for a long time. HDR 400 is the entry level tier in the VESA DisplayHDR scheme for desktop monitors, and you will see hundreds of displays carry this badge and promote to you just how supposedly brilliant their HDR performance is.

In our opinion the criteria for this certification are just far too lax, and it is widely being abused in the monitor market by manufacturers as a result. HDR has one simple requirement that underpins everything: an improved dynamic range (aka contrast ratio). If the display lacks any ability to improve the dynamic range beyond its native panel performance, it can’t by definition be considered anywhere near the term “HDR” in our opinion. What makes up “true HDR” is another conversation, but having no ability to even attempt to improve the dynamic range is certainly nowhere near. There are some considerations to make here when you compare IPS vs VA panels, but fundamentally, to improve the dynamic range and offer improved HDR performance in the display market, you need to be able to dim darker areas of the picture while raising the brightness in others. This is achieved through “local dimming”, whether that is via the backlight itself by splitting it into a number of zones or, in the case of OLED panels, through individual pixel control. The problem is that this entry-level HDR 400 tier does not require local dimming of any sort, so manufacturers can earn the badge even where their display can’t technically do any kind of local dimming! And no, we don’t consider “global dimming” of any use here. That’s just a glorified name for dynamic contrast ratio and doesn’t do anything to improve the dynamic range of the viewed image.
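The difference between global and local dimming can be shown with some simple arithmetic. This is a simplified sketch under stated assumptions: a made-up 1000:1 native panel contrast, a black level that scales directly with the backlight behind it, and no blooming or halo effects between zones.

```python
NATIVE_CONTRAST = 1000.0  # assumed native panel contrast ratio, illustration only


def black_level_nits(backlight_white_nits, native_contrast=NATIVE_CONTRAST):
    """Luminance of a black pixel, given the white luminance the backlight produces."""
    return backlight_white_nits / native_contrast


def global_dimming_contrast(backlight_white_nits):
    """One backlight level for the whole frame: white and black scale together."""
    return backlight_white_nits / black_level_nits(backlight_white_nits)


def local_dimming_contrast(bright_zone_nits, dark_zone_nits):
    """Bright and dark zones driven separately within the same frame."""
    return bright_zone_nits / black_level_nits(dark_zone_nits)


print(f"Global, backlight at 400 nits: {global_dimming_contrast(400):.0f}:1")
print(f"Global, backlight at 100 nits: {global_dimming_contrast(100):.0f}:1")
print(f"Local, zones at 400 and 20 nits: {local_dimming_contrast(400, 20):.0f}:1")
```

However far the whole backlight is dimmed, the contrast within a single frame stays at the native ratio in this model. Only driving zones independently raises it.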

While improving the dynamic range is a fundamental part of HDR, the other key element that is widely associated with this content is colour performance. For HDR content you need a screen with a colour gamut (typically >90% DCI-P3 coverage) that is wider than that of SDR content, which conforms only to the common sRGB colour space. Colour improvements with more vivid and bright colours are a key part of the HDR content experience, and so you need a screen that can offer a wide colour gamut. Likewise you want the screen to be able to handle 10-bit colour depth processing for smooth colour gradients and to process all those colours properly. Our other issue with the HDR 400 standard is that it requires neither of those things to earn the certification badge!

So in theory (and you will find many of these screens) you can have a display that can simply accept an HDR10 input signal, and sure it can then do appropriate tone mapping and will have a slightly improved brightness (400 nits+), but it has none of the hardware requirements to actually create anywhere near an HDR image. It could have no local dimming and so cannot physically improve the dynamic range. It has a standard sRGB gamut colour space only, and an 8-bit colour depth. And yet it can still receive the HDR 400 badge! This is a farce.
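The gap described above can be written down as a simple check. To be clear, this is not the VESA test procedure. The first function only encodes the entry-tier requirements as characterised in this article (accepts an HDR10 signal and reaches 400 nits), the second lists the hardware capabilities we argue are needed, and the example display and its figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Display:
    name: str
    peak_nits: float
    accepts_hdr10: bool
    local_dimming: bool
    dci_p3_coverage_pct: float
    colour_depth_bits: int


def passes_entry_tier_as_described(display):
    """Entry-tier check as characterised in this article, not the real spec."""
    return display.accepts_hdr10 and display.peak_nits >= 400


def missing_hdr_hardware(display):
    """Capabilities we argue a screen needs before it goes near the HDR term."""
    missing = []
    if not display.local_dimming:
        missing.append("local dimming")
    if display.dci_p3_coverage_pct <= 90:
        missing.append("wide colour gamut")
    if display.colour_depth_bits < 10:
        missing.append("10-bit colour depth")
    return missing


# Hypothetical screen matching the scenario in the paragraph above.
basic = Display("Example 27in", peak_nits=400, accepts_hdr10=True,
                local_dimming=False, dci_p3_coverage_pct=75.0,
                colour_depth_bits=8)

print(passes_entry_tier_as_described(basic))
print(missing_hdr_hardware(basic))
```

The example screen passes the first check while missing every item in the second, which is the mismatch we are complaining about.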

Some manufacturers may offer some of these enhancements off their own back, even though they didn’t need to in order to get the HDR 400 badge. Plenty of modern screens will have wide colour gamut and 10-bit colour depth, that is thankfully quite widespread now. Although not all of them will and the consumer still needs to try and figure that out. A few panel and display manufacturers have even started, to their credit, adding some form of local dimming to their HDR 400 type options to try and improve the backlight control and boost the overall image dynamic range (e.g. the recently tested Gigabyte Aorus FI32U). This is a step in the right direction. Obviously for truly top end HDR you ideally need a lot more dimming zones, a higher peak brightness etc. But our main issue here is the use of the HDR 400 badge, that implies HDR performance, when in reality there is nothing in the performance criteria that requires anything close to HDR enhancements. You have a massive range of capabilities between a wide range of displays all carrying this badge and it makes life very confusing for the consumer.

This has been going on for too long in the monitor market. We would really love to see VESA step in here and be at the forefront of driving market improvements. They should either drop this tier altogether, or re-invent it to tighten up the criteria properly. The criteria used for the higher HDR 500, HDR 600 and above tiers is much better, requiring backlight local dimming, wide colour gamut and 10-bit colour depth as standard. So why is the HDR 400 tier any different? What is its purpose other than allowing manufacturers to promote an HDR badge with the VESA name on it, even though frankly it’s giving the whole thing a bad name? Tighten up those criteria and manufacturers will be forced to up their game and improve their display HDR capabilities. That can only be a good thing for the consumer. Let’s stop rewarding lazy attempts at HDR with a badge that many consumers won’t realise doesn’t mean much, and instead use it as a means to improve the HDR market.

1. More realistic G2G response time figures, without chasing marketing numbers

- Provide more realistic specs and stop chasing misleading numbers

- Get rid of overly aggressive overdrive modes and settings that are unusable

This one has been a problem in the monitor market since LCD displays first started to appear: the constant chasing of numbers in specs, which normally don’t translate back to real life experience or actual measurements. It’s always been the case that the G2G (grey to grey) response time figure quoted is the “best case” measurement, and the average across a wide range of measured transitions may well vary and be slower. Consumers can read reviews from sites who measure response times in more detail to get a more accurate picture. This “best case” stretches the credibility of a paper spec for response time somewhat, although it’s probably fair to say the market understands that quite well and can take that into consideration when buying. The problem though is that this has started to become even more stretched, as manufacturers chase the latest and greatest G2G figures.
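The gap between a "best case" figure and the average is easy to see with a small worked example. The transition times below are hypothetical and chosen only to illustrate the point. Real review measurements typically cover a much larger grid of grey-level transitions.

```python
from statistics import mean

# Hypothetical G2G measurements in ms, keyed by (start grey level, end grey level).
TRANSITIONS_MS = {
    (0, 255): 1.0,
    (0, 128): 6.5,
    (128, 255): 4.0,
    (255, 0): 3.5,
    (128, 0): 5.0,
    (255, 128): 7.0,
}


def summarise_g2g(transitions_ms):
    """Best, average and worst response time across the measured transitions."""
    times = list(transitions_ms.values())
    return {
        "best_ms": min(times),
        "average_ms": mean(times),
        "worst_ms": max(times),
    }


summary = summarise_g2g(TRANSITIONS_MS)
print(f"Spec sheet style 'best case': {summary['best_ms']:.1f} ms")
print(f"Average across transitions:  {summary['average_ms']:.1f} ms")
print(f"Slowest transition:          {summary['worst_ms']:.1f} ms")
```

A spec sheet built from this data could quote the single fastest transition, even though the average transition is several times slower.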

What we are now seeing is manufacturers implementing overdrive modes on displays that are deliberately over the top, and simply unusable. They are pushing the overdrive impulse to the maximum, driving down G2G figures so that they can quote 1ms, or even specs like 0.5ms that you will see today in some cases. While this will drive down the G2G figure, it doesn’t tell the full story, and they fail to explain that to get there, the overshoot artefacts in motion become a major problem.

The overdrive is applied so aggressively that overshoot appears with major pale or dark halos (or both) on moving content. Yes, they’ve achieved the marketed G2G figure, but it’s at the cost of major issues. This makes the mode entirely unusable in most cases and makes the whole thing pointless. All too often we see displays which have several sensible and usable overdrive modes that the manufacturer clearly intends you to use, then a maximum mode which is so drastically different that it is clearly there just for marketing purposes. No one is ever going to use it, but they can reach 1ms (actually not always!) and put that on their spec page.

We would really like to see this trend stop. A response time figure needs to be relevant to a mode that can actually be used. Trying to cover it by being clever in market specs and saying “1ms G2G (maximum overdrive mode)” or similar doesn’t help. They might as well be saying “1ms G2G that you can’t use”.

We would much rather manufacturers quote realistic and achievable specs for modes that the buyer can use, and just remove those pointless maximum modes completely. We think most people would have more respect for a product that has realistic specs, based on settings they can actually use, than specs that have no relevance to what is usable.

If a manufacturer wants to quote 1ms G2G and be at the front of that race to the latest specs, they should have to earn it through proper R&D, new panels and a focus on overdrive control and behaviour. Not fudge their way there to a spec by creating something no one can ever use.

